AI and mass public health atrocities

AI: friend or foe of human rights abuses? A case study

By J. Steven Bromwich

Targets of public health

Between 1965 and 1975, Native American women of child-bearing age were targeted for mass sterilization by federal and local public officials, with the Indian Health Service (IHS) as the central agency involved. These officials had the cooperation of many local doctors and hospitals. For example, between 1973 and 1976 alone, IHS facilities in four of the agency's twelve service areas sterilized 3,406 Native American women. (General Accounting Office, now the Government Accountability Office, 1976 investigation).

Why target these women? Some scholars argue that federal officials sought sterilization to reduce poverty and long-term dependency. (Lawrence, Jane. 2000. “The Indian Health Service and the Sterilization of Native American Women,” American Indian Quarterly 24(3): 400–419).

The sterilization of Native American women was the continuation of the eugenics movement of the previous 50 years, a movement that some argue continues today. The eugenics movement sought to “improve” the population, the gene pool, if you will, by reducing “less desirable” humans while increasing those who had more desirable traits. (Stern, Alexandra Minna. Eugenic Nation, 2005).

State laws around the nation, beginning with Indiana's in 1907, authorized forced and coerced sterilization as one means of achieving these public health goals. Federal sterilization abuses led to new consent regulations in 1978 that legally ended widespread coercive sterilization practices. (HEW sterilization regulations, 1978).

Estimates of the scale vary widely; some activists and researchers have suggested that as many as 25–50% of Native American women of child-bearing age were sterilized without consent, either through coercion or without their knowledge during other procedures.

AI could make eugenics programs more efficient and devastating

AI acts as a force multiplier for the intent of its users. AI is not a moral agent. It augments the moral agency of those who design and deploy it.

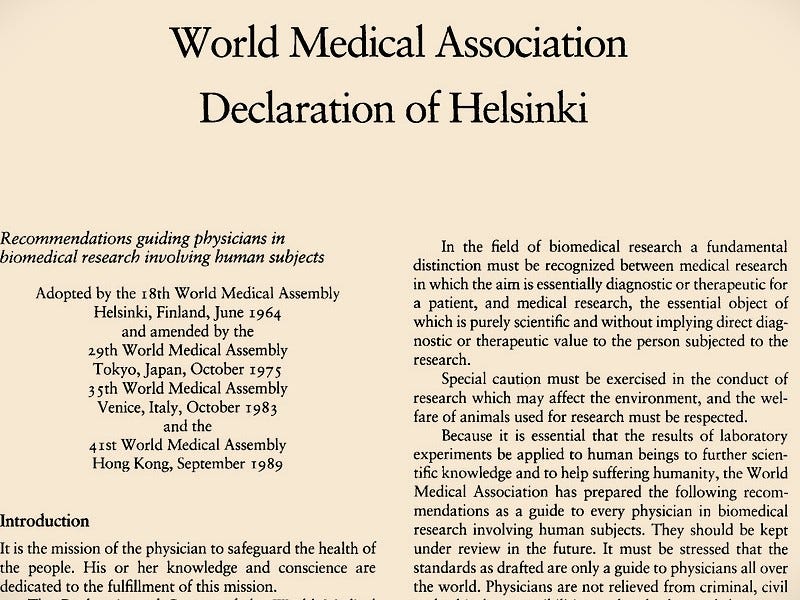

The intent of many public health officials in the 1960s and 1970s was unethical, violating well-established medical consent standards and targeting a vulnerable population despite contemporaneous objections and regulatory requirements. By the time these sterilizations were occurring, the principles of voluntary informed consent had already been firmly established in international medical ethics through the Nuremberg Code and the 1964 Declaration of Helsinki.

If public health officials in 1965 had had access to AI tools, how might the eugenics program against these women have been different? I argue it would have been more efficient and more devastating for the women and communities being targeted, because those using such tools were already violating established ethical and regulatory norms intended to protect the rights of these women.

Predictive targeting: AI algorithms

AI algorithms could have been used to scan medical records and census data to flag women who met specific socio-economic criteria (e.g., those with multiple children or receiving federal aid). This would have significantly scaled the eugenics process.

The 1970 Family Planning Services and Population Research Act (Title X) expanded federal funding for family planning services, including sterilization, which increased access to the procedure through Medicaid and IHS. Federal reimbursement made sterilization financially feasible for providers and institutions. In the context of Native American women and eugenics, this could have incentivized providers and health facilities to perform more sterilizations.

Algorithmic bias and dehumanization

Data in the 1960s was often filtered through a lens of poverty as a pathology. The intellectual foundation underpinning this lens was the “Culture of Poverty” theory, popularized by anthropologist Oscar Lewis in his 1961 book The Children of Sánchez. The theory effectively blamed poor people and poor families for poverty. Through this lens, poverty is a moral and hereditary failure. (Lewis, O. 1966. The Culture of Poverty. Scientific American, 215(4), 19-25).

An AI model trained on such biased data and supported by this underlying belief-system would have scored Native American communities as high-cost or unfit. With the “scientific” evidence justifying forced sterilization as a cost-saving measure for the government and a good for society, an AI governance team would likely give the model a green light for deployment.

“The common good” is language that repeatedly emerges to justify unethical practices. This language suggests that it is ethical, even obligatory, to sacrifice the rights and health of certain individuals, even entire communities, for the good of the larger society.

Why waste resources on the hopeless poor, the reasoning goes, when that money can be spent on programs for people who will be productive members of society? If we must sterilize thousands of women for the good of millions, then so be it. The “common good,” otherwise known as utilitarian ethics, is not a relic of the past; it is a staple of ethical reasoning today.

The efficiency algorithm

Federal agencies like HEW (Health, Education, and Welfare) could have used predictive modeling to estimate long-term fiscal burdens associated with poverty. AI would have generated a “Sterilization Priority List,” identifying women for forced sterilization based on their projected cost to taxpayers.

Federal funds could then have been used to reward providers and facilities that met or exceeded performance targets and to punish those that did not. The coerced sterilization of Native American women could then have become an optimized business objective.

Value-based eugenics

In contemporary healthcare, “value-based care” is meant to communicate the prioritization of patient outcomes, but it often translates to minimizing high-cost patients. (My previous article on Predictive Mortality Tools highlights one method used to minimize costs).

AI excels at risk stratification. In this case study’s overlay, the AI would identify Native American women not just by race, but by predicted lifetime cost. If the algorithm determined that a specific demographic had a high probability of chronic illness (often due to poverty), it would flag sterilization as the most cost-effective clinical intervention to prevent future high-cost dependents.

If a private healthcare contractor managed IHS facilities, its AI would optimize for “throughput.” Short procedural interventions are typically reimbursed more per encounter than longitudinal primary care. The AI would create productivity dashboards for doctors, pushing them to “convert” patients to permanent procedures to meet quarterly financial targets.

The ethics of AI depends on the ethics of humans

This article has highlighted how artificial intelligence would have likely amplified the unethical sterilization practices carried out against Native American women in the 1960s and 1970s. AI does not manufacture injustice, but it can scale the intentions of those who design and deploy it. AI can transform ethical violations into efficient and systematized abuses.

Although AI might have intensified these abuses, transparent systems and auditable data could also have helped whistleblowers, journalists, and investigators identify, quantify, and challenge the abuses earlier and more efficiently. This is dependent, however, on data and intent being transparent and accessible for scrutiny.

Those who design, implement, and oversee AI systems in healthcare and public policy must be grounded in medical and research ethics. Models optimized solely for efficiency, cost reduction, or productivity risk repeating historical injustices in new forms. Behind every dataset, prediction, and performance metric is a patient, a human being, whose dignity and rights must remain central for developers, caregivers, and institutions.

About the author

J. Steven Bromwich is an RN, clinical ethicist, and investigator. With a background spanning bedside care, bioethics, and criminal investigation, he founded The Standard of Care Report to bring the dignity of patients and the vocation of caregiving to the foreground amid AI advances and the systemic challenges in healthcare.

As you clearly show how AI could have been used to more efficiently target Native American women, I do wonder whether it is being used for such unethical purposes as I write this. I assume, unfortunately, the answer is yes.