AI, Prognosis, and Patient Autonomy

Autonomy and the AI hospital

Artificial intelligence in healthcare must be evaluated in light of fundamental principles about the human person. Among these, autonomy and informed consent are central.

A case study

Although fictional, this scenario is clinically routine in American ICUs, so you may recognize it. Neither the names nor the conditions come from a real patient.

Susan, a 73-year-old married woman, suffered a hemorrhagic stroke. She has been in the ICU for 5 days. Her husband, Joe, and two of her three children are present, praying and hoping she will recover.

The prognosis for hemorrhagic stroke is usually poor. There have been good signs in Susan’s case, however. Even though Susan is on the ventilator, she has begun “breathing over the vent,” which means she is breathing in a limited way on her own. Susan has started responding to stimuli.

The entire process is overwhelming for the family because it was so unexpected. Susan was healthy and active before the stroke.

The medical team arrives in Susan’s room. The neurologist tells the family that continuing medical treatment for the stroke is futile, and it is time to think about keeping her comfortable. He then introduces the topic of palliative care, but the family does not hear anything past “futile.”

Susan’s husband, Joe, asks, “Why? What has happened? She seems to be making progress.” To the family, this seems so sudden.

The doctor replies that her lab values, scans, and other metrics indicate no improvement and, in fact, may indicate she is declining. He then suggests a DNR (Do Not Resuscitate) order for her. He also says the palliative care team will be in to discuss options with the family.

Mortality prediction tools are AI tools built into the electronic medical record. These tools “read” the patient’s medical data. They are frequently intended to prompt earlier goals-of-care conversations. Most clinicians use them thoughtfully and with good intentions. It is the experience of many clinicians in critical care that patients may suffer longer than necessary when the burdens caused by treatments outweigh the benefits.

The family is overwhelmed with grief and confusion. A short time later, the palliative care team arrives. They express care for the patient and family. They, too, explain that Susan’s care appears futile and explain what “comfort care” would mean for Susan.

Of data, doctors, and algorithms

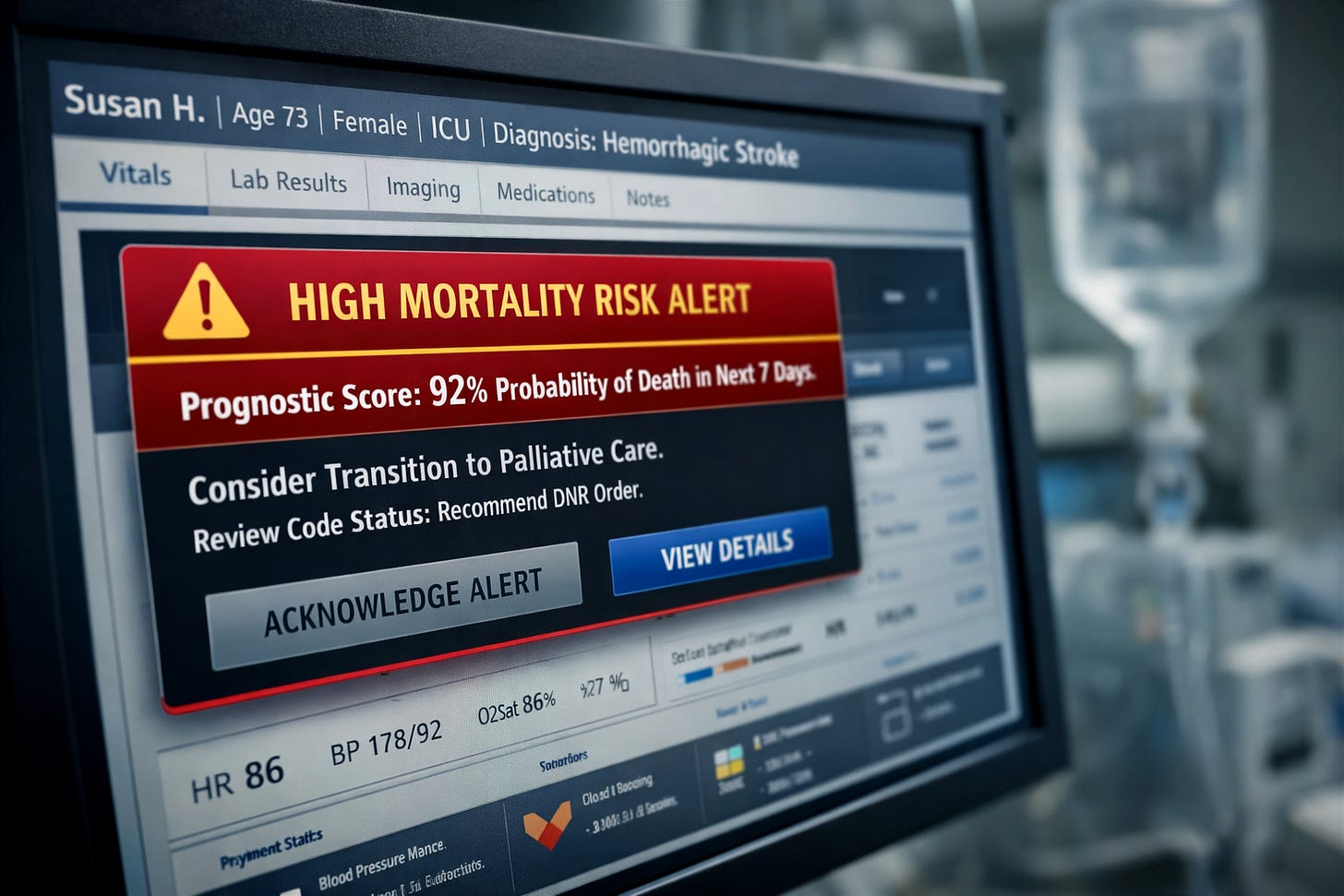

On day five in the ICU, an alert appeared in the corner of Susan’s electronic medical record (EMR). These alerts are based on what is sometimes referred to as a predictive mortality score or a prognostic score. They tell the medical team whether a patient has a high chance of dying within a set time window (30 days, for example). Susan’s score indicated a high probability of death in the near term. The alert did not make the decision, but it shaped the frame through which the medical team discussed the decision.

The mortality prediction alert goes to the attending physician, the palliative care team, and others who need to know. The alert, if the mortality score is high, may also prompt the physician to change the plan of care.

The AI “learns” from large datasets of prior patients with similar diagnoses and clinical features. Using supervised learning methods, the model analyzes patterns between physiological variables and outcomes such as 30-day mortality.

It does not simply look for a small group of identical patients and calculate a percentage. Rather, it identifies statistical relationships across thousands of cases and applies those learned patterns to the current patient. Based on how Susan’s lab values, imaging, vital signs, age, and diagnoses align with those patterns, the system may generate a prediction, for example, a 90% estimated probability of death within 30 days.

This is a probabilistic forecast, not a certainty, and it depends heavily on the quality and representativeness of the data on which the model was trained.
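To make the idea of "learning statistical relationships" concrete, here is a minimal sketch of supervised learning using logistic regression on a fabricated cohort. Everything in it is an assumption for illustration: the two standardized "severity" features, the invented relationship that generates outcomes, and the function names. Commercial mortality models use far more variables and may rely on different model families, so this is not a description of any specific hospital tool.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def make_synthetic_cohort(n: int, seed: int = 0):
    """Generate fabricated (features, died_within_30_days) pairs.

    The two features are made-up standardized severity measures, and the
    'true' relationship below is invented purely for illustration.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x = [rng.gauss(0, 1), rng.gauss(0, 1)]
        p_true = sigmoid(-1.0 + 1.5 * x[0] + 0.8 * x[1])
        y = 1 if rng.random() < p_true else 0
        rows.append((x, y))
    return rows


def fit_logistic(rows, epochs: int = 300, lr: float = 0.1):
    """Fit weights and a bias by batch gradient descent on log loss."""
    n_features = len(rows[0][0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in rows:
            # Prediction error for this prior patient.
            err = sigmoid(b + sum(wi * xi for wi, xi in zip(w, x))) - y
            for j in range(n_features):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / len(rows) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(rows)
    return w, b


def predict_risk(w, b, x) -> float:
    """Apply the learned pattern to one new patient's features."""
    return sigmoid(b + sum(wi * xi for wi, xi in zip(w, x)))


cohort = make_synthetic_cohort(2000)
weights, bias = fit_logistic(cohort)

# Two hypothetical patients: one with high severity values, one with low.
sicker = predict_risk(weights, bias, [2.0, 1.5])
healthier = predict_risk(weights, bias, [-1.0, -0.5])
print(f"sicker: {sicker:.2f}, healthier: {healthier:.2f}")
```

The output is a probability for each patient, not a verdict. It reflects only the patterns present in the training cohort, which is why a cohort that differs from the current patient population can produce misleading numbers.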

Hospitals are expected to validate these tools against their own patient populations to improve accuracy and mitigate bias. Even well-validated models can suffer calibration drift when deployed in new clinical settings, meaning their predicted mortality risk may systematically overestimate or underestimate real-world outcomes.
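One simple way to look for this kind of miscalibration is to compare the average predicted risk with the death rate actually observed in a local group of patients, sometimes summarized as an observed-to-expected ratio. The sketch below uses ten fabricated patients; the numbers and the function name `calibration_summary` are assumptions for illustration, and a real validation would use far larger samples and more detailed methods.

```python
def calibration_summary(predicted, observed):
    """Return (mean predicted risk, observed death rate, observed/expected ratio).

    A ratio well below 1 suggests the model overestimates risk in this
    population; a ratio well above 1 suggests it underestimates risk.
    """
    if not predicted or len(predicted) != len(observed):
        raise ValueError("predicted and observed must be non-empty and equal in length")
    mean_pred = sum(predicted) / len(predicted)
    obs_rate = sum(observed) / len(observed)
    return mean_pred, obs_rate, obs_rate / mean_pred


# Fabricated example: predicted 30-day mortality risk for ten patients,
# and whether each patient actually died within 30 days (1) or not (0).
predicted = [0.9, 0.8, 0.85, 0.7, 0.9, 0.75, 0.8, 0.95, 0.6, 0.75]
observed = [1, 0, 1, 0, 1, 1, 0, 1, 0, 0]

mean_pred, obs_rate, ratio = calibration_summary(predicted, observed)
print(f"mean predicted: {mean_pred:.2f}, observed: {obs_rate:.2f}, O/E: {ratio:.2f}")
```

In this made-up group, the model predicts a much higher average risk than the observed death rate, the pattern of systematic overestimation described above.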

Based on these data, the algorithm generates a mortality prediction for Susan and every other patient in the ICU. While datasets can be audited for bias, the models themselves are often proprietary, limiting transparency for both clinicians and patients. Even when the model is not proprietary, clinicians may not understand how it produces its estimate and therefore may be unable to explain it to families. This lack of explainability is not merely a technical limitation; it is an ethical concern.

Patient autonomy and the new paternalism

Medicine has a long history of paternalism. In recent decades it has been largely addressed and overcome. Paternalism essentially says, “I am the doctor and I know what’s best for you. You do not need to know the details of your illness or the treatments.” One especially troubling feature of this paternalism was the withholding of a terminal diagnosis from patients for “their own good.”

While autonomy is intrinsic to the human person, its central place in modern medical ethics developed in response to this history. Patients have a right to know the nature of their illness, the risks and benefits of proposed treatments, and the alternatives available to them, subject to rare exceptions. Over time, this commitment has reshaped medical practice: patients can access their medical records, seek second opinions, and participate meaningfully in decisions about their care.

When a prognostic recommendation originates in a model that the patient cannot examine and the clinician cannot explain, autonomy becomes procedural rather than substantive. The authority of the physician risks becoming derivative, borrowed from an opaque statistical system rather than grounded in clinical judgment.

These concerns intensify if a physician feels pressure, from institutional quality metrics, documentation expectations, or liability concerns, to act on the AI prompt embedded in the medical record.

Restoring autonomy in the AI hospital

In moments like this, preparation matters. Difficult questions should be discussed in advance as a family and, when possible, written down before a crisis arrives. Asking questions of the medical team is not confrontational; it is an exercise of informed consent.

“What information brought you to the conclusion that Mom’s care is futile, especially considering the positive signs we have witnessed?” “Did an AI alert prompt you to recommend this new course of care?” “How did the AI arrive at that conclusion?” “Do you agree with it?” “Are there alternatives?”

Overcoming algorithm-mediated clinical authority requires making these systems explainable and accountable for the decisions they prompt. Clinical judgment must remain primary, not derivative.

To maintain robust patient autonomy and informed consent, AI alerts must be explainable. If patients are unaware that these systems are shaping recommendations behind the scenes, they cannot ask the right questions or meaningfully participate in decisions about their care. Autonomy will erode one alert at a time.

About the author

J. Steven Bromwich is an RN, clinical ethicist, and investigator. With a background spanning bedside care, bioethics, and criminal investigation, he founded The Standard of Care Report to bring the dignity of patients and the vocation of caregiving to the foreground amid AI advances and the systemic challenges in healthcare.

I think most patients and their families would be surprised by these AI alerts.